How Product Managers can get teams to realise improved productivity from GenAI

Why the best product leaders aren't choosing between GenAI tools for speed and human insight: they're mastering both

Your engineering team is generating unit tests with GenAI.

Your designers are prototyping in Figma with the assistance of GenAI prompting.

Your PMs are drafting PRDs in ChatGPT faster than ever.

But, when you present the quarterly product roadmap to leadership, you're likely to face uncomfortable questions about whether all this GenAI productivity is translating into better products.

I've been living this tension for the past three years, and it's been bloody frustrating.

Having spent 20 years building and launching AI/ML products, progressing from product leader to Level 7 IC at Amazon, scaling AI/ML products that generated £100M+ in value at McKinsey QuantumBlack and Hitachi Vantara, and now running my own AI product consultancy, I thought I understood how to help teams adopt new technologies to increase productivity.

I was wrong.

The tension is real. What I've discovered, often the hard way, is that I was treating GenAI like just another productivity tool.

In fact, it's something much more fundamental.

In the same position? Let's unpack it.


The pattern I started noticing

"The problem isn't GenAI adoption itself. It's that most product leaders are treating GenAI like a productivity tool when it's actually a fundamental shift in how we approach building products."

Here's what I started seeing in the teams and companies I was working with and advising. Brilliant PMs who used to spend hours crafting user stories were generating them in minutes using ChatGPT.

They were moving faster, but I noticed something unsettling. They were losing the deep thinking that used to happen during those hours of crafting. They weren't challenging the GenAI's assumptions about user needs because the output looked professional and sounded plausible.

I realised I was watching a replay of something that had burned me years earlier: the pattern of optimising for speed whilst accidentally sacrificing the reasoning that created value in the first place.

I'd seen it kill projects worth millions of dollars.

Early in my career at Cambridge Consultants, I spent months debugging why our beautifully engineered medical devices kept failing user testing. The problem wasn't technical. We lacked an optimised development process, which meant we had lost touch with the actual clinical workflow.

"We were building fast, but building the wrong things."

The same dynamic is happening with GenAI in product teams. Whilst PMs are generating market research with ChatGPT, they're not developing the muscle to question whether the insights reflect real user behaviour.

Furthermore, they're taking these insights and creating prototypes with AI prompt assistance, but losing the iterative thinking that comes from manually struggling with design problems.

My challenge as a product educator

As someone who's spent their career translating between technical teams and C-suites, I find myself in an uncomfortable position. I want to educate product managers on how they can utilise GenAI to benefit their teams and clients.

Still, I'm witnessing brilliant people lose the critical thinking that made them excellent product builders in the first place.

GenAI tools aren't a universal productivity boost, as this YouTube video on engineer productivity discusses. They work best for clearly defined tasks, and experienced professionals should use their judgement to decide when to rely on them and when not to.

For me, the pressure is real when attempting to educate product experts.

It’s not just about how you use GenAI tools to improve product lifecycle productivity; it’s about staying relevant while keeping teams competitive.

Figuring out how to leverage GenAI's capabilities without turning people into content generators who lose touch with actual user needs is also a challenge.

"The solution isn't to limit GenAI usage. It's to redesign how your team engages with GenAI so they develop 'AI-Augmented Product Judgement'."

After watching several promising GenAI productivity initiatives fizzle out, not because the technology failed but because the teams had optimised for speed over insight, I started experimenting with a different approach.

What I eventually figured out

After much trial and error across healthcare, financial services, and enterprise software teams, I stumbled upon an approach that seemed to work.

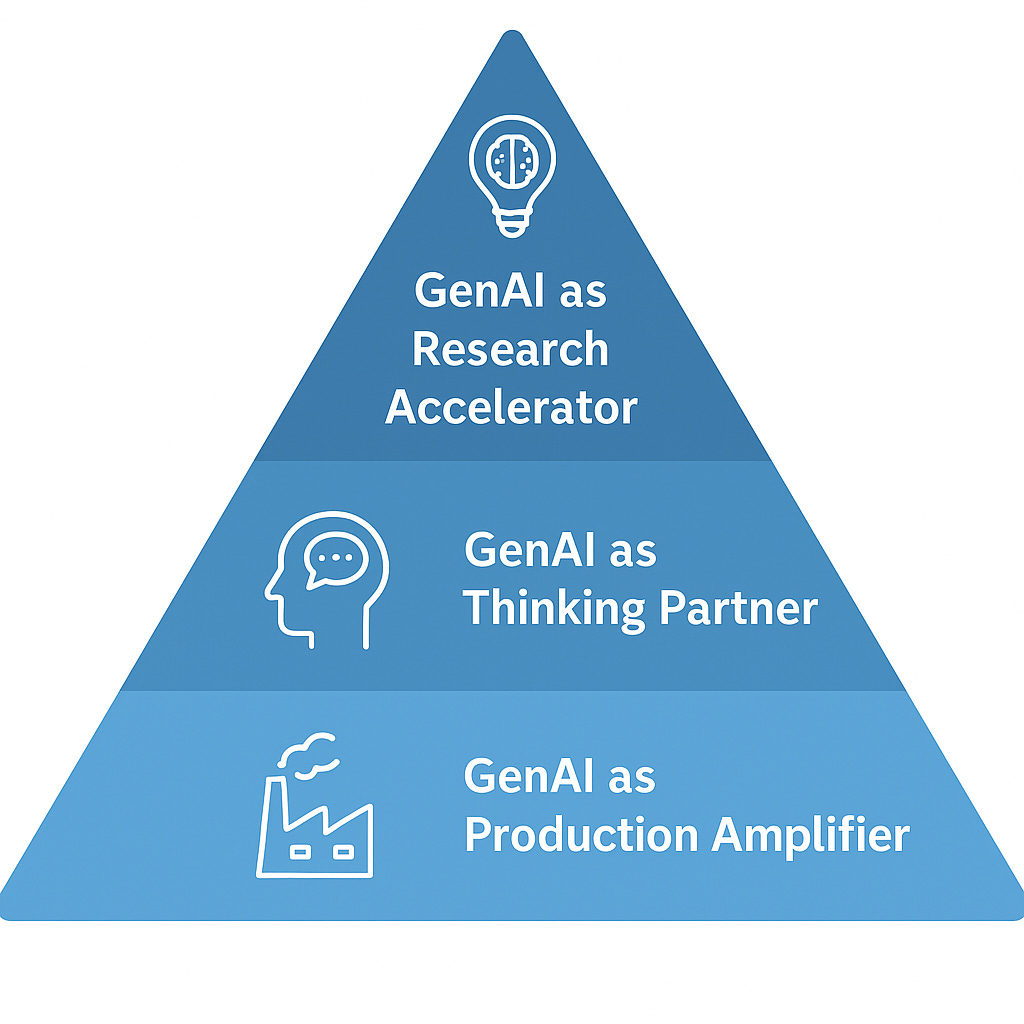

Instead of trying to limit GenAI usage or letting teams run wild with it, I started structuring how we engaged with it in three distinct layers:

Layer 1: GenAI as Research Accelerator

Rather than using GenAI to generate final outputs, we use it to accelerate research. When PMs need market insights, they use GenAI to generate 10 hypotheses about user behaviour (after it's been fed anonymised findings), then design validation methods to test the top 3.

This fosters critical thinking while leveraging AI's pattern recognition capabilities.
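The "generate 10, test the top 3" step can be made concrete by scoring each hypothesis before committing validation effort. The sketch below is illustrative only: the `Hypothesis` fields, the 1-5 scores, and the impact-times-uncertainty ranking are assumptions made for this example, not a prescribed method. The point is that the PM, not the model, does the scoring.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    statement: str
    impact: int       # 1-5: how much it matters if true (the team's judgement)
    uncertainty: int  # 1-5: how little evidence exists today

    @property
    def priority(self) -> int:
        # High-impact, poorly evidenced hypotheses are worth validating first.
        return self.impact * self.uncertainty


def top_hypotheses(candidates: list[Hypothesis], n: int = 3) -> list[Hypothesis]:
    """Return the n hypotheses most worth validating with real users."""
    return sorted(candidates, key=lambda h: h.priority, reverse=True)[:n]


# Hypothetical GenAI-generated hypotheses, scored by the PM rather than the model.
candidates = [
    Hypothesis("Users abandon onboarding at the data-import step", 5, 4),
    Hypothesis("Admins want audit logs more than dashboards", 3, 2),
    Hypothesis("Mobile users only need read access", 4, 4),
    Hypothesis("Pricing page confuses small teams", 2, 2),
]

for h in top_hypotheses(candidates):
    print(h.priority, h.statement)
```

Each shortlisted hypothesis still needs a human-designed validation method (interviews, usage data, a prototype test); the ranking only decides where that effort goes.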

Layer 2: GenAI as Thinking Partner

Train your teams to be GenAI challengers, not just generators. Before finalising any AI-generated content, get them to design prompts that ask the GenAI to argue against their conclusions.

"What assumptions in this PRD might be wrong?"

"What user needs am I missing in this feature spec?"

It's like having a devil's advocate who never gets tired.
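As a rough sketch of how a team might standardise these challenge prompts, the helper below pairs each question with a draft. The function name, the reviewer instruction, and the third question are assumptions for illustration; it only builds prompt text and deliberately makes no call to any particular GenAI service.

```python
# The first two questions come from the examples above; the third is an
# illustrative addition in the same spirit.
CHALLENGE_QUESTIONS = [
    "What assumptions in this {artefact} might be wrong?",
    "What user needs am I missing in this {artefact}?",
    "What evidence would contradict the conclusions in this {artefact}?",
]


def build_challenge_prompts(artefact: str, draft: str) -> list[str]:
    """Pair each challenge question with the draft so the model critiques it."""
    prompts = []
    for question in CHALLENGE_QUESTIONS:
        prompts.append(
            "Act as a sceptical reviewer. Do not rewrite the draft.\n"
            f"{question.format(artefact=artefact)}\n\n"
            f"--- DRAFT ---\n{draft}"
        )
    return prompts


# Hypothetical usage with a placeholder draft.
prompts = build_challenge_prompts("PRD", "Goal: reduce onboarding drop-off by 20%.")
print(prompts[0])
```

Keeping the questions in one shared list means the whole team challenges drafts in the same way, and the list can grow as new blind spots are discovered.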

Layer 3: GenAI as Production Amplifier

Once the thinking is solid, use GenAI to scale the execution. For example, generate multiple PRD versions for different stakeholders. Create presentation variants for engineering vs. executive audiences. Build prototype variations to test edge cases.

This approach solves the empathy problem: instead of replacing human insight, AI becomes a tool for developing deeper insights more quickly.

"AI should amplify your team's ability to think deeply about users, not replace that thinking with faster content generation."

How is this connected to my journey?

Looking back on my path from product leader to running AI product initiatives, I realised that the most significant transitions in my career weren't about learning new tools; they were about redesigning how I thought about problems.

When I was scaling AI/ML platforms at Sensyne Health from 10,000 to 22.5 million patient records, the technical challenge wasn't the hardest part. The real challenge was maintaining product quality whilst moving at AI speed.

"We were processing medical data that could impact patient outcomes. I couldn't afford to optimise for velocity at the expense of accuracy."

That's when I first tested what became my standard approach: use AI to accelerate hypothesis generation and validation design, but require human reasoning for all conclusions about patient impact.

It enabled us to move 40% faster than traditional development while maintaining the rigorous thinking that healthcare demands.

I started applying the same principle everywhere, whether building financial software or e-commerce platforms. AI should amplify the team's ability to think deeply about users, not replace that thinking with faster content generation.

What worked in practice

When I started implementing this with teams, I had to figure out the practical details through experimentation.

Here's what ended up working:

Weeks 1-2: Reality Check

Get each PM to document their current AI usage and measure time spent on analysis vs. content generation.

Ask the team to explore where they are primarily using AI for output creation rather than insight development.

Weeks 3-4: Learning to Challenge

Introduce the challenge-prompt methodology.

For every AI-generated insight, provide a follow-up prompt that questions the initial output.

"What evidence would contradict this user insight?"

"What market dynamics am I not considering?"

Weeks 5-8: Testing the Framework

Apply the three-layer approach to a real product initiative, using GenAI for research acceleration, thinking partnership, and production amplification.

Maintain human ownership of all strategic decisions.

Weeks 9-12: Measuring What Mattered

Track both velocity metrics (e.g., time to PRD completion) and quality metrics (e.g., stakeholder feedback on insight depth).
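A minimal sketch of tracking the two kinds of metric side by side is shown below. The record fields, the 1-5 stakeholder rating scale, and the sample numbers are all hypothetical; the only idea taken from the text is that a speed gain should be read alongside a quality measure, never on its own.

```python
from statistics import mean

# Hypothetical records: days to complete a PRD and a 1-5 stakeholder
# rating of insight depth, before and after adopting the framework.
baseline = [
    {"days_to_prd": 10, "insight_score": 4},
    {"days_to_prd": 8, "insight_score": 3},
]
current = [
    {"days_to_prd": 6, "insight_score": 4},
    {"days_to_prd": 6, "insight_score": 4},
]


def summarise(records):
    """Average the velocity and quality measures for one period."""
    return {
        "avg_days_to_prd": mean(r["days_to_prd"] for r in records),
        "avg_insight_score": mean(r["insight_score"] for r in records),
    }


def compare(before, after):
    """Report the speed-up and the quality change together."""
    b, a = summarise(before), summarise(after)
    speedup = 100 * (b["avg_days_to_prd"] - a["avg_days_to_prd"]) / b["avg_days_to_prd"]
    quality = a["avg_insight_score"] - b["avg_insight_score"]
    return {
        "velocity_change_pct": round(speedup, 1),
        "quality_change": round(quality, 2),
        # Warning sign: the team got faster but stakeholders rate insight lower.
        "faster_but_shallower": speedup > 0 and quality < 0,
    }


print(compare(baseline, current))
```

If `faster_but_shallower` ever comes back true, that is the signal to revisit the challenge-prompt habits before pushing for more speed.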

The entire process took about three months to take hold, but the results were well worth it.

Where this has led me, and now you

Working through this approach didn't just solve my immediate productivity concerns; it changed how executives saw me. I became the person they consulted when they needed to understand how GenAI should change product development.

"You become the person executives consult when they need to understand how AI should actually change product development."

This matters because there's a real scarcity of product leaders who can navigate both the technology and the team dynamics effectively. Most are either GenAI sceptics who are falling behind or GenAI enthusiasts who are sacrificing quality for speed.

By developing what I call "AI-Augmented Product Judgement", I've been building something that scales across product initiatives. Teams maintain the deep user empathy and critical thinking that create breakthrough products, while leveraging GenAI to move faster than traditional approaches.

"The teams that master this balance will dominate the next five years of product development. The teams that don't will find themselves producing faster mediocrity whilst their competitors ship AI-augmented breakthroughs."

I'm not sure if I've fully grasped it yet, but I do know that wrestling with these challenges has been the most significant work of my career.

Because the question isn't whether AI will change how we build products; it's whether we'll be intentional about how that change happens.

🙋‍♂️ Are you stuck making tactical automation decisions, or shifting towards strategic GenAI productivity?

Drop your views in the comments.

Let's compare notes.

🔁 Share with a designer or PM still treating agents like chatbots.